As many of us have become more aware, especially during the ongoing pandemic, social media plays a significant role in our lives. Social networking sites have made communicating and connecting with the world effortless. Be it Facebook, WhatsApp, Instagram, Twitter, or YouTube, these big names have become synonymous with our idea of social interaction and communication. As social media becomes a more integrated part of our lives, it’s time to question how much we actually know about how these tech giants work and how they rope us in.

Designing the algorithm

To keep the platform exciting and hold the user’s attention, a platform needs a tool that suggests relevant content to each user based on their preferences and usage history. Social media algorithms use mathematics and machine learning to select the posts most suited to your interests.

Why do companies spend millions of dollars developing such complex algorithms and constantly fine-tuning them over the years? These platforms want their users to spend as much time on them as possible. Hence, they aim to provide content that matches the user’s interests and keeps them engaged. The more time people spend, the more ads they see, and the more these platforms profit. Recently, many platforms have said they are committed to creating a more meaningful experience for users and weeding out irrelevant content.

For example, Facebook ranks posts based on a set of ranking signals. These ranking signals are data points collected from the user’s past behaviour.

In simpler terms, which posts does the user like the most? Which other users do they interact with the most? These are some of the data points that are used.
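To make the idea of ranking signals concrete, here is a minimal sketch of a feed ranked by two signals: how often the user likes a post's topic and how often they interact with its author. The `Post` class, the signal names, and the weights are all hypothetical; real platforms do not publish theirs, and this is not Facebook's actual method.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    author: str
    topic: str


# Hypothetical weights, chosen only for illustration.
WEIGHTS = {"author_affinity": 0.6, "topic_affinity": 0.4}


def score(post, likes_by_topic, interactions_by_author):
    """Combine two ranking signals from past behaviour into one number."""
    total_likes = sum(likes_by_topic.values()) or 1
    total_interactions = sum(interactions_by_author.values()) or 1
    # Share of the user's likes that went to this post's topic.
    topic_affinity = likes_by_topic.get(post.topic, 0) / total_likes
    # Share of the user's interactions that involved this post's author.
    author_affinity = interactions_by_author.get(post.author, 0) / total_interactions
    return (WEIGHTS["author_affinity"] * author_affinity
            + WEIGHTS["topic_affinity"] * topic_affinity)


def rank_feed(posts, likes_by_topic, interactions_by_author):
    """Return the posts ordered from highest to lowest score."""
    return sorted(
        posts,
        key=lambda p: score(p, likes_by_topic, interactions_by_author),
        reverse=True,
    )


posts = [
    Post("a", "alice", "cooking"),
    Post("b", "bob", "football"),
    Post("c", "carol", "cooking"),
]
likes_by_topic = {"football": 8, "cooking": 2}
interactions_by_author = {"alice": 1, "bob": 9}

ranked = rank_feed(posts, likes_by_topic, interactions_by_author)
print([p.post_id for p in ranked])  # ['b', 'a', 'c']
```

The post about football by bob comes first because both signals point to it, which is the basic behaviour described above.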

Instagram, on the other hand, showcases posts of accounts that the user has frequent interactions with. It also shows the recent posts at the top of the user’s feed. Hence, users see the latest content from their preferred accounts.
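A rough sketch of that behaviour: keep only posts from accounts the user interacts with often, then show the newest first. The function name, the tuple layout, and the `min_interactions` threshold are assumptions made for illustration, not Instagram's actual logic.

```python
from datetime import datetime, timedelta


def build_feed(posts, interaction_counts, min_interactions=3):
    """posts is a list of (account, posted_at) tuples.

    Keep posts from frequently contacted accounts, newest first.
    The threshold is a hypothetical cut-off.
    """
    preferred = [
        post for post in posts
        if interaction_counts.get(post[0], 0) >= min_interactions
    ]
    return sorted(preferred, key=lambda post: post[1], reverse=True)


now = datetime(2021, 1, 1, 12, 0)
posts = [
    ("friend", now - timedelta(hours=5)),
    ("stranger", now - timedelta(minutes=1)),
    ("sibling", now - timedelta(hours=1)),
]
interaction_counts = {"friend": 10, "sibling": 4, "stranger": 0}

feed = build_feed(posts, interaction_counts)
print([account for account, _ in feed])  # ['sibling', 'friend']
```

The stranger's post is the most recent, yet it never reaches the feed because the user has no history with that account.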

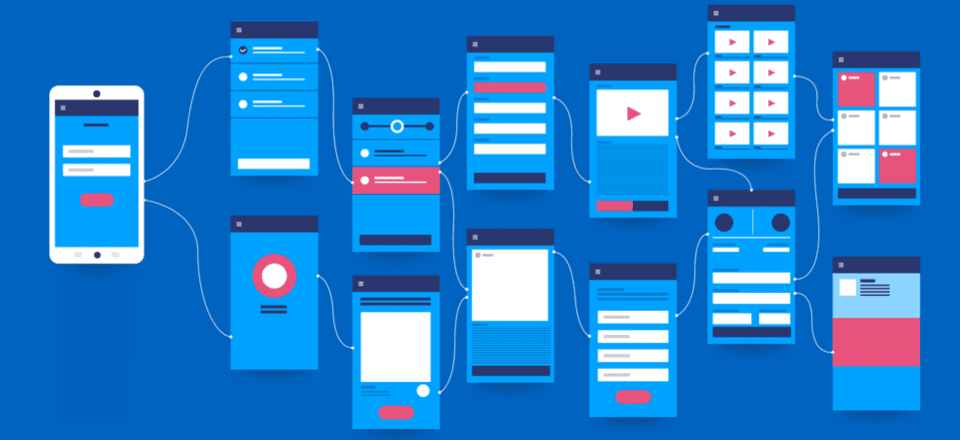

How are user interfaces designed to manipulate users?

User fatigue is one of the biggest concerns when algorithms are used to shape a user interface. An interactive genetic algorithm monitors the amount of time the user spends on the platform, the type of content they are interested in, and much more. For example, if a user scrolls through posts incessantly, the algorithm switches things up and showcases fresh content, hoping to hold the user’s attention. These features are easy to observe in everyday applications like Instagram and YouTube.

What are cookies, and how do they affect our user experience?

In simple words, a cookie is a small piece of text that a website stores in your browser and reads back on later visits. For example, when we log in to a website, cookies let it remember us and display content personalised to our preferences. Cookies set by the website we are actually visiting are called first-party cookies and are generally a lot safer.

In other cases, however, cookies are set by the ads and pop-ups embedded in a website, which don’t directly enhance its user experience, and these tend to be less safe. They collect user data, sometimes without consent, and can combine it with data gathered from the many other websites that display the same advertiser’s content. These are commonly known as third-party cookies. A few cookies we come across are hard to get rid of and continue recording data even when the page isn’t in use.
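The difference between the two kinds of cookie comes down to whose domain the cookie belongs to. The sketch below parses a `Set-Cookie` header with Python's standard `http.cookies` module and compares the cookie's domain with the page being visited. The function name and the `.example` domains are made up, and the suffix check is a simplification: real browsers compare registrable domains using the Public Suffix List.

```python
from http.cookies import SimpleCookie
from urllib.parse import urlsplit


def classify_cookies(page_url, set_cookie_header, response_host):
    """Label each cookie in a Set-Cookie header as first- or third-party.

    response_host is the server that sent the header; it is used when
    the cookie carries no Domain attribute.
    """
    page_host = urlsplit(page_url).hostname
    jar = SimpleCookie()
    jar.load(set_cookie_header)

    labels = {}
    for name, morsel in jar.items():
        domain = (morsel["domain"] or response_host).lstrip(".").lower()
        # Simplified check: the page's host equals the cookie domain
        # or is a subdomain of it.
        is_first_party = page_host == domain or page_host.endswith("." + domain)
        labels[name] = "first-party" if is_first_party else "third-party"
    return labels


page = "https://www.shop.example/cart"

# Cookie set by the shop itself.
print(classify_cookies(page, "session_id=abc123; Domain=shop.example",
                       "www.shop.example"))
# {'session_id': 'first-party'}

# Cookie set by an ad server embedded in the same page.
print(classify_cookies(page, "uid=xyz789; Domain=ads.example",
                       "cdn.ads.example"))
# {'uid': 'third-party'}
```

Because the same ad server can be embedded in many unrelated sites, its `uid` cookie is what lets it recognise one visitor across all of them.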

Flash cookies and zombie cookies are the worst of the lot. Flash cookies are stored outside the browser’s regular cookie storage, so they can remain on the user’s device even after browser cookies are cleared. Zombie cookies, on the other hand, are hard to delete and reappear on devices even after multiple removal attempts. These varied ways of continued data collection can be harmful in more ways than we realise.

This collected data helps websites build a database of their users’ habits and interests, which leads to more targeted advertisements, which leads to more data collection, and the cycle continues. Such databases can be a precursor to manipulation, extortion, and breaches of cyber safety. The collection of data hasn’t changed the way people use websites very much. However, it has sparked a debate on whether it crosses the line into ‘invasion of privacy’.

Personalisation of advertisements

Many bigger corporations and websites strive to personalise content for their users, and this too relies on cookies. Recommendations such as ‘next up’, ‘you might also like’, and ‘users also bought’ are the result of data collected by cookies. Cookies are what keep us stuck in the loop and hooked on endless scrolling. They can be observed on shopping websites like Amazon, streaming platforms like YouTube, and many others. Established websites and businesses often publish privacy statements declaring what information the cookies on their platforms collect and how they function, partly to protect themselves against disputes.
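One simple way a ‘users also bought’ list can be produced is by counting which items appear together in recorded histories. The sketch below assumes a hypothetical store of purchase histories keyed by cookie ID; the data and the function name are invented, and real recommendation systems are far more elaborate.

```python
from collections import Counter


def also_bought(histories, item, top_n=3):
    """Return the items most often bought alongside `item`.

    histories maps a (hypothetical) cookie ID to the list of items
    recorded for that visitor.
    """
    counts = Counter()
    for items in histories.values():
        unique_items = set(items)
        if item in unique_items:
            # Count every other item seen in the same history.
            counts.update(unique_items - {item})
    # Sort by count, then by name so that ties are deterministic.
    ordered = sorted(counts, key=lambda other: (-counts[other], other))
    return ordered[:top_n]


histories = {
    "cookie-1": ["phone", "case", "charger"],
    "cookie-2": ["phone", "case"],
    "cookie-3": ["phone", "case", "charger", "headphones"],
    "cookie-4": ["laptop", "mouse"],
}

print(also_bought(histories, "phone", top_n=2))  # ['case', 'charger']
```

The more histories a site has recorded, the more reliable these counts become, which is why the recommendations depend on the data that cookies help collect.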

Precision and use of data

These models have developed over time through years of data assessment and trials. Apps have been asking users about their timeline preferences and studying their activity to predict their future behaviour. When we think carefully about what is happening, the algorithm is drawing conclusions about our psyche by analysing a massive collection of data points gathered through user interactions. Some platforms have databases so large that their predictions of preferred content keep becoming more precise.

How is it affecting our minds?

Recently, many industry insiders have been speaking about the addiction and mental health problems that can quickly arise from being engrossed in social media. Have you ever picked up your phone and found that 30 minutes had suddenly flown by? We can think of the algorithm as reinforcing our dependency on these platforms. Each time we get content that interests or intrigues us, we want more of the same. The next thing we know, we are stuck in the loop, feeling emptier and unfulfilled. The algorithm itself is not devious; rather, it is the people wielding it to create a market and increase profits.

The rise of false information and political unrest

Not only do these algorithms trap our attention, but their methods of digging up supposedly relevant content also influence people. In turn, this might lead to a change in their opinions on political or global issues. That doesn’t mean the algorithm makers want to control anyone’s political or social views; it merely indicates that the designs tend to recommend material that strengthens the user’s existing opinions.

Hence, if one stumbles upon some video or post of an idea or opinion, soon the person would find themselves clicking away at similar posts and starting to believe it. This is how political polarisation and fake news find their place on the internet.
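This loop can be illustrated with a toy simulation. It assumes a recommender that always shows the topic the user has clicked most so far, and a user who clicks whatever is shown. Both are deliberately crude assumptions, and the topic names and starting counts are invented.

```python
def simulate_feedback_loop(initial_clicks, rounds):
    """Track how the favourite topic's share of all clicks grows.

    Each round the (hypothetical) recommender shows the most-clicked
    topic, and the user is assumed to click on it.
    """
    clicks = dict(initial_clicks)
    shares = []
    for _ in range(rounds):
        favourite = max(clicks, key=clicks.get)
        clicks[favourite] += 1
        shares.append(clicks[favourite] / sum(clicks.values()))
    return shares


# One chance extra click on 'politics' is enough to start the loop.
shares = simulate_feedback_loop({"politics": 2, "sports": 1, "music": 1}, rounds=6)
print([round(share, 2) for share in shares])
# [0.6, 0.67, 0.71, 0.75, 0.78, 0.8]
```

A slight initial lean grows from half of the user's clicks to four fifths in six rounds, while the other topics never appear again.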

A piece of absurd fake news can snake its way through social media and flourish quite easily. This leads directly to the creation and spread of many different narratives around a single piece of news or social issue. With so many versions floating around, the algorithm does what it’s designed for: it picks out the stories that agree with the opinions of a user, their friends, and their communities. It thus shows information about an issue from a limited set of perspectives.

Instead of getting an overall grasp of the issue, each person looks at a timeline tailor-made for them. This only helps build and consolidate polarised opinions and prejudices. It also leaves room for manipulation that may have substantial real-life consequences.

A rather infamous example is the case of Cambridge Analytica, in connection with the 2016 US election and Brexit. The firm used algorithms to map voters’ psychology and identify who could be manipulated most easily. Through social media, it then deliberately fed those targets polarising information of its own choosing. The extent to which this was successful is debatable, but there was undoubtedly an effort to influence voters and change electoral outcomes.

A darker story is that of Myanmar, a nation that only recently gained widespread access to the internet. Within a few years from 2013, internet availability increased from 10% to 90%. In 2016, the state-run telecom company and Facebook even entered a partnership, making Facebook the centre of the population’s online activities. The platform also became a breeding ground for baseless, false news, with some Buddhist extremists using it to spread hate and bias against minorities. This led to mass violence between the communities and to 600,000 Rohingya fleeing and taking refuge in Bangladesh. Social media and its algorithms facilitated the exponential spread of misinformation and led to tragic consequences in real life.

All of this is not to say that these algorithms, and social media at large, are forces that perpetuate only evil. For example, an IIT Roorkee alumnus recently developed an algorithm to identify misogynistic posts on social media, creating awareness about the choices we make online. Tools like algorithms, cookies, and user-interface designs are developed to maximise interaction, grow user bases, and enhance the user’s experience. However, in the hands of a few people, these tools are used to create monopolies in various fields. They are also used to create situations with dire consequences, such as the disruption of democracy, political polarisation, and the spread of misinformation.

In this age of false information and scope for addiction, consumers need to understand the full potential of this data collection and the problems that arise with it. The exponential rates at which these algorithms evolve and their capability in handling massive collections of data are overwhelming for a human brain. They don’t merely act as tools but as forces used to control and manipulate minds, in turn dictating our choices and lives.

Written by Vasundhara Rathi and Anushka Bhattacharyya for MTTN

Edited by Rushil Dalal for MTTN

Featured Image by Samara Chandravarkar for MTTN

Image Sources: Google Images, tccpro.net
